Watch the Video Lesson Here: Basics of Neural Networks, clearly explained.

The concept of Neural Networks is not difficult to understand, whatever your area of specialization. In this lesson, we will explain the concept of Neural Networks (NN), also called Artificial Neural Networks, and then give a formal definition. Then we will look at some applications of Neural Networks.

As usual, we will keep it simple and clear so that you can easily understand and appreciate the concept. We will cover the following:

- What is a Neural Network

- Application of Neural Networks

- Neural Network Implementation

- Simple Model of Neural Networks – The Perceptron

- Bias and Sigmoid Function

- Final Notes

- A More Detailed Mathematical Model (Next Lesson)

1. What is a Neural Network

A neural network is a computing system modeled on the biological neural networks that make up the human brain. A neural network is not explicitly programmed with task-specific rules; instead, it progressively learns and improves its performance over time.

A neural network is made up of a collection of units, or nodes, called neurons. These neurons are connected to one another by connections called synapses. Through a synapse, a neuron can transmit a signal to a nearby neuron. The receiving neuron processes the signal and passes it on to the next one. The process continues until an output signal is produced.

2. Application of Neural Networks

Neural Networks are a branch of Artificial Intelligence, so their application areas involve systems that try to mimic the human way of doing things. Modern application areas of neural networks include:

Computer Vision: No program can be written by hand to make the computer recognize every object in existence. With a neural network, the computer can, over time, learn to recognize new things based on what it has previously learnt.

Pattern Recognition/Matching: This can be applied to searching a repository of images to match, say, a face with a known face. It is used in criminal investigation.

Natural Language Processing: Systems that allow the computer to recognize spoken human language by listening and learning progressively over time.

3. Neural Network Implementation

So how can we implement an artificial neural network in a real system? The first step is to have a network of nodes that represent the neurons. Then we assign a real number to each of the neurons. These real numbers represent the signal held by each neuron.

The output of each neuron is calculated by a non-linear function. This function takes the sum of all the inputs of that neuron.

Now, both neurons and synapses usually have a weight that is adjusted continually as learning progresses. This weight controls the strength of the signal the neuron sends across the synapse to the next neuron. Neurons are normally arranged in layers. Different layers may perform different kinds of transformation on their inputs. Signals move through the layers, including the hidden layers, all the way to the output.
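As a rough sketch of the description above, the following Python snippet pushes signals through a small layered network. The layer sizes, the weight values and the choice of the sigmoid (introduced in section 5) as the non-linear function are assumptions made purely for illustration; the lesson itself does not fix them.

```python
import math

def sigmoid(z):
    """Non-linear function that squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(inputs, weights):
    """Compute the signal of every neuron in one layer.

    weights[j][i] is the weight on the connection (synapse) from
    input i to neuron j of this layer.
    """
    outputs = []
    for neuron_weights in weights:
        # Weighted sum of all the inputs reaching this neuron.
        weighted_sum = sum(w * x for w, x in zip(neuron_weights, inputs))
        outputs.append(sigmoid(weighted_sum))
    return outputs

# Hypothetical network: 3 inputs -> 2 hidden neurons -> 1 output neuron.
hidden_weights = [[0.5, -0.2, 0.1],
                  [0.3, 0.8, -0.5]]
output_weights = [[1.0, -1.0]]

signals = [1.0, 0.0, 1.0]                       # signals held by the input neurons
hidden = layer_forward(signals, hidden_weights)  # signals of the hidden layer
output = layer_forward(hidden, output_weights)   # final output signal
print(hidden, output)
```

In this sketch the weights are fixed by hand. In a real network they would be adjusted during learning, which is the subject of later lessons.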

4. Simple Model of Neural Networks – The Perceptron

The perceptron is the simplest model of a neuron that illustrates how a neural network works. The perceptron is a machine learning algorithm developed in 1957 by Frank Rosenblatt and first implemented on the IBM 704.

|

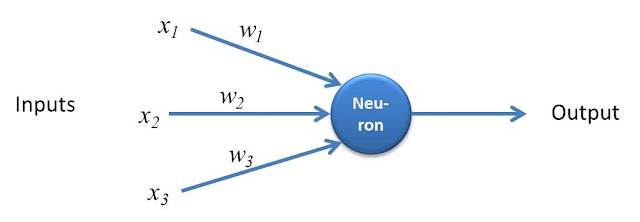

| Figure 1: How the Perceptron Works |

How it Works

How the perceptron works is illustrated in Figure 1. In the example, the perceptron has three inputs x1, x2 and x3 and one output.

The importance of each input is determined by the corresponding weight w1, w2 or w3 assigned to it. The output is either 0 or 1, depending on the weighted sum of the inputs.

The output is 0 if the weighted sum is below a certain threshold and 1 if it is above that threshold. This threshold is a real number and a parameter of the neuron.

This is shown below in Equation 1.

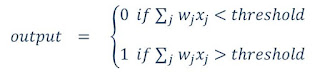

|

| Equation 1: output of a perceptron |
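The thresholding rule described above can be sketched in a few lines of Python. The weight values, the threshold and the function name `perceptron` are hypothetical, chosen only for illustration. The lesson does not say what happens when the weighted sum equals the threshold exactly, so this sketch assumes the output is 0 in that case.

```python
def perceptron(inputs, weights, threshold):
    """Return 1 if the weighted sum of the inputs exceeds the threshold, else 0."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    # Assumption: a sum exactly equal to the threshold does not activate the neuron.
    return 1 if weighted_sum > threshold else 0

# Hypothetical values for the three weights w1, w2, w3 of Figure 1.
weights = [0.6, 0.3, 0.1]
threshold = 0.5

print(perceptron([1, 0, 0], weights, threshold))  # weighted sum 0.6 is above 0.5 -> 1
print(perceptron([0, 1, 1], weights, threshold))  # weighted sum 0.4 is below 0.5 -> 0
```

Because w1 is the largest weight, the first input alone is enough to push the sum over the threshold, while the other two inputs together are not. This is what it means for the weights to express the importance of each input.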

This operation of the perceptron illustrates the basics of Neural Networks and serves as a good introduction to learning about them.

We will examine a more detailed model of a neural network in part 2, to keep this lesson as simple as possible.

5. Introducing a Bias and Sigmoid Function

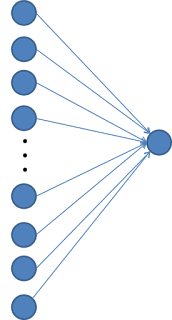

Let's now consider a general example. This time we have not just 3 inputs but n inputs. The formula for the weighted sum now becomes:

$$w_1 x_1 + w_2 x_2 + \dots + w_n x_n$$

which is not much different from the one we previously had. But if we use a function like this one, the output could be any number. However, we want the output to be a number between 0 and 1.

So we pass this weighted sum into a function that produces values between 0 and 1. What function would that be? The sigmoid function, which is also known as the logistic curve.

For the sigmoid function, very negative inputs give outputs close to 0, very positive inputs give outputs close to 1, and the curve rises most steeply around zero.
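To make this behaviour concrete, here is a minimal Python sketch of the sigmoid function using its standard definition, 1 / (1 + e^(-z)). The sample inputs are arbitrary and only meant to show the trend.

```python
import math

def sigmoid(z):
    """Logistic (sigmoid) function: 1 / (1 + e^(-z))."""
    # Note: math.exp can overflow for extremely negative z (roughly below -700);
    # this sketch ignores that edge case.
    return 1.0 / (1.0 + math.exp(-z))

# Very negative inputs approach 0, very positive inputs approach 1,
# and an input of 0 gives exactly 0.5.
for z in (-10, -1, 0, 1, 10):
    print(z, sigmoid(z))
```

Whatever the size of the weighted sum, the value that comes out always lies strictly between 0 and 1.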

Now, suppose we want the neuron to activate only when the weighted sum is greater than some threshold: below this threshold the neuron does not activate, and above it the neuron activates. We call this threshold the bias and include it in the function.

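We would then have

$$\sigma(w_1 x_1 + w_2 x_2 + \dots + w_n x_n - b)$$

where σ is the sigmoid function and b is the bias. The bias is a measure of how high the weighted sum needs to be before the neuron activates.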
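A small Python sketch can show how the bias shifts the point at which the neuron activates. It assumes the bias is the threshold described above and is therefore subtracted from the weighted sum before the sigmoid is applied; many texts instead add a bias equal to the negative of that threshold, which gives the same result. The function name `neuron_output` and all numeric values are hypothetical.

```python
import math

def neuron_output(inputs, weights, bias):
    """Sigmoid of (weighted sum - bias), with the bias acting as the threshold."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    # Assumption: the bias is subtracted, following the threshold reading in the text.
    return 1.0 / (1.0 + math.exp(-(weighted_sum - bias)))

inputs = [1.0, 1.0, 1.0, 1.0]
weights = [0.5, 0.5, 0.5, 0.5]   # weighted sum = 2.0

print(neuron_output(inputs, weights, bias=2.0))   # sum equals the bias -> 0.5
print(neuron_output(inputs, weights, bias=10.0))  # sum far below the bias -> close to 0
print(neuron_output(inputs, weights, bias=-6.0))  # sum far above the bias -> close to 1
```

With the same inputs and weights, raising the bias makes the neuron harder to activate and lowering it makes activation easier.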

What we have considered so far has just two layers, as shown above. But neural networks can contain several layers, and that is what we will consider in subsequent lessons on Artificial Intelligence.

6. Final Notes

- However complex the Neural Network concept appears, you now have the underlying principle:

- A set of inputs is combined with weights (plus a bias or error, to be discussed further in the next lesson) to provide an output.

- Neurons are connected to each other by means of synapses

- Each neuron sends its signal (output) to the next neuron

- The network undergoes a learning process over time to become more efficient.

In the next lesson, we will look in more detail at how a Neural Network works.
